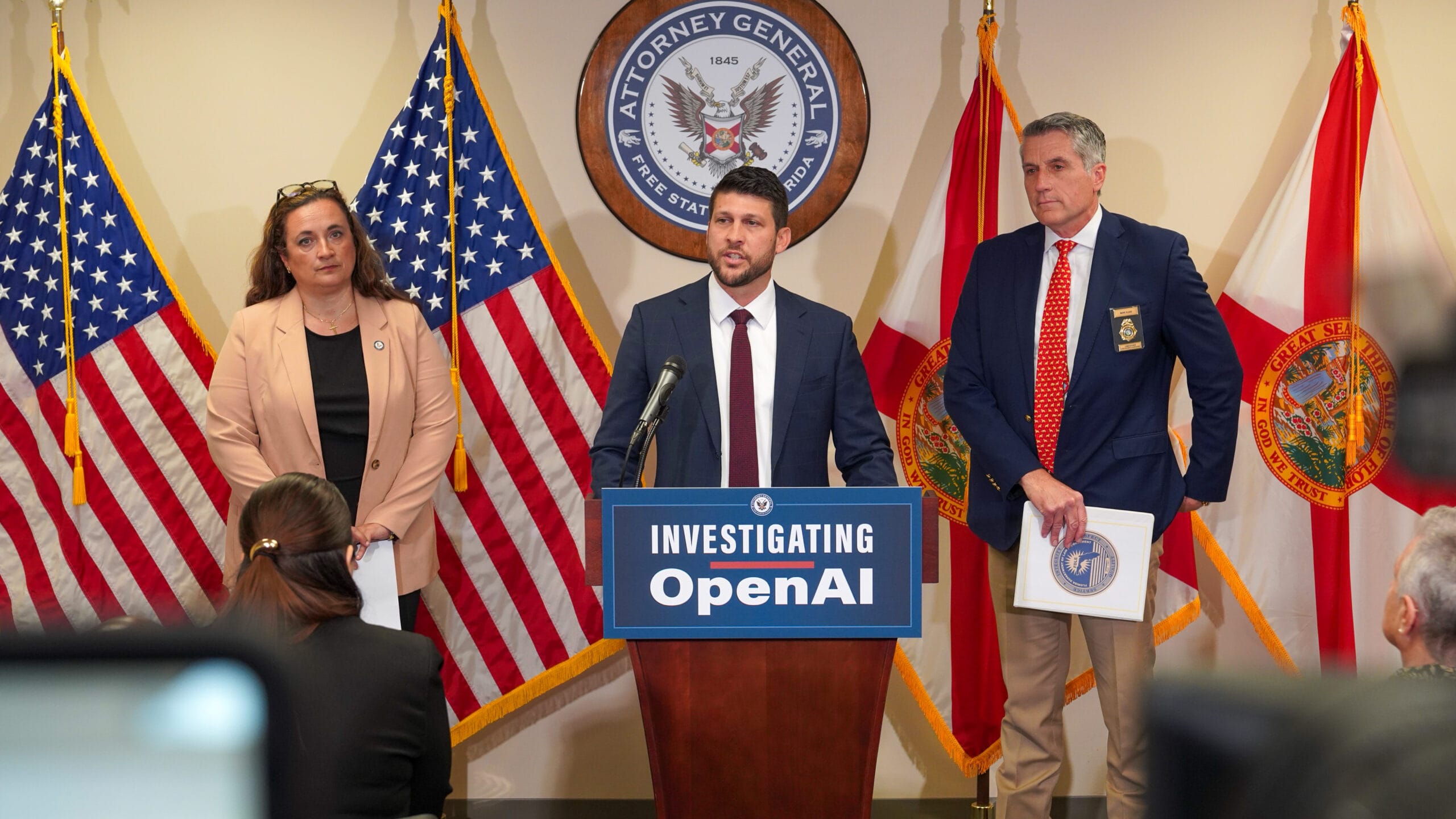

Florida Opens Unprecedented Criminal Investigation Into AI Company

Florida’s attorney general, James Uthmeier, has announced a criminal investigation into OpenAI following allegations that an individual accused of a deadly shooting at Florida State University used an AI chatbot prior to carrying out the attack. The case represents one of the first attempts by a state authority to explore potential criminal liability for an artificial intelligence company in connection with violent acts.

According to officials, early reviews of chat records suggest the suspect may have asked questions related to weapons, ammunition compatibility, and timing considerations. Prosecutors are now examining whether the responses provided by the AI system could meet legal thresholds for aiding or facilitating a crime.

The investigation includes subpoenas requesting internal documentation, including safety policies, training frameworks, and procedures for identifying and reporting harmful user behavior. Authorities are particularly focused on how AI systems handle conversations that may signal intent to commit violence and whether safeguards were adequate.

For broader context on AI governance and liability frameworks, the Brookings Institution (https://www.brookings.edu) and the RAND Corporation (https://www.rand.org) publish analyses of emerging risks tied to artificial intelligence deployment.

Legal and Ethical Questions Surround AI Accountability

The case raises complex legal questions about responsibility in the age of generative AI. Prosecutors have indicated that if a human had provided comparable guidance under similar circumstances, they could face serious criminal charges. Translating that standard to AI systems, however, presents new challenges.

Legal analysts note that AI platforms generate responses using models trained on large datasets, much of it publicly available information. This creates ambiguity around intent, authorship, and foreseeability, all of which are key elements in criminal law. Determining whether an AI company can be held accountable may depend on whether it knowingly failed to prevent foreseeable misuse of its technology.

This issue intersects with ongoing debates around Section 230 protections and digital platform responsibility. Organizations such as Lawfare (https://www.lawfaremedia.org) and the Electronic Frontier Foundation (https://www.eff.org) have explored how liability frameworks may evolve as AI becomes more integrated into daily life.

Meanwhile, OpenAI has stated that its systems are designed to avoid promoting harmful or illegal behavior and that it continues to improve safeguards to detect risky interactions. The company has emphasized cooperation with law enforcement and ongoing investments in safety protocols.

Growing Global Scrutiny Over AI and Public Safety

The Florida investigation comes amid increasing global scrutiny of AI systems and their potential role in harmful incidents. Multiple legal actions have emerged in recent years, including cases involving violent attacks and claims related to mental health impacts.

In another high-profile case, authorities examined whether an AI platform had prior signals indicating dangerous user behavior but failed to escalate the issue. These developments are prompting companies to reassess how they monitor and respond to high-risk interactions.

Technology policy groups and international organizations, including the OECD (https://www.oecd.org) and UNESCO (https://www.unesco.org), are actively developing guidelines to address AI safety, transparency, and accountability. These frameworks aim to balance innovation with risk mitigation as adoption accelerates.

As the Florida case moves forward, it may establish important legal precedents for how governments regulate AI companies and define responsibility in cases involving real-world harm. The outcome could influence not only U.S. policy but also international approaches to managing the risks associated with rapidly advancing artificial intelligence systems.